What's up with Anthropic predicting AGI by early 2027?

I operationalize Anthropic's prediction of "powerful AI" and explain why I'm skeptical

As far as I’m aware, Anthropic is the only AI company with official AGI timelines1: they expect AGI by early 2027. In their recommendations (from March 2025) to the OSTP for the AI action plan they say:

As our CEO Dario Amodei writes in ‘Machines of Loving Grace’, we expect powerful AI systems will emerge in late 2026 or early 2027. Powerful AI systems will have the following properties:

Intellectual capabilities matching or exceeding that of Nobel Prize winners across most disciplines—including biology, computer science, mathematics, and engineering.

[...]

They often describe this capability level as a “country of geniuses in a datacenter”.

This prediction is repeated elsewhere and Jack Clark confirms that something like this remains Anthropic’s view (as of September 2025). Of course, just because this is Anthropic’s official prediction2 doesn’t mean that all or even most employees at Anthropic share the same view.3 However, I do think we can reasonably say that Dario Amodei, Jack Clark, and Anthropic itself are all making this prediction.4

I think the creation of transformatively powerful AI systems—systems as capable or more capable than Anthropic’s notion of powerful AI—is plausible in 5 years and is more likely than not within 10 years. Correspondingly, I think society is massively underpreparing for the risks associated with such AI systems.

However, I think Anthropic’s predictions are very unlikely to come true (using the operationalization of powerful AI that I give below, I think powerful AI by early 2027 is around 6% likely). I do think they should get some credit for making predictions at all (though I wish the predictions were more precise, better operationalized, and they also made intermediate predictions prior to powerful AI). In this post, I’ll try to more precisely operationalize Anthropic’s prediction so that it can be falsified or proven true, talk about what I think the timeline up through 2027 would need to look like for this prediction to be likely, and explain why I think the prediction is unlikely to come true.

[Thanks to Ajeya Cotra, Ansh Radhakrishnan, Buck Shlegeris, Daniel Kokotajlo, Eli Lifland, James Bradbury, Lukas Finnveden, and Megan Kinniment for comments and/or discussion.]

What does “powerful AI” mean?

Anthropic has talked about what powerful AI means in a few different places. Pulling from the essay by Dario Amodei Machines of Loving Grace5:

In terms of pure intelligence, it is smarter than a Nobel Prize winner across most relevant fields – biology, programming, math, engineering, writing, etc. This means it can prove unsolved mathematical theorems, write extremely good novels, write difficult codebases from scratch, etc.

In addition to just being a “smart thing you talk to”, it has all the “interfaces” available to a human working virtually, including text, audio, video, mouse and keyboard control, and internet access. It can engage in any actions, communications, or remote operations enabled by this interface, including taking actions on the internet, taking or giving directions to humans, ordering materials, directing experiments, watching videos, making videos, and so on. It does all of these tasks with, again, a skill exceeding that of the most capable humans in the world.

It does not just passively answer questions; instead, it can be given tasks that take hours, days, or weeks to complete, and then goes off and does those tasks autonomously, in the way a smart employee would, asking for clarification as necessary.6

The resources used to train the model can be repurposed to run millions of instances of it (this matches projected cluster sizes by ~2027), and the model can absorb information and generate actions at roughly 10x-100x human speed. It may however be limited by the response time of the physical world or of software it interacts with.

We could summarize this as a “country of geniuses in a datacenter”.

It’s not entirely clear if the term “powerful AI” is meant to refer to some reasonably broad range of capability where the prediction is intended to refer to the start of this range and this text from Machines of Loving Grace is supposed to refer to a central or upper part of this range. However, the discussion in the recommendations to the OSTP is pretty similar, which implies that the prediction corresponds to a version of “powerful AI” matching this description and given Anthropic’s communications it would be pretty misleading if this wasn’t roughly the description that is supposed to go along with the prediction.

While some aspects of “powerful AI” are clear, the descriptions given don’t fully clarify key aspects of this level of capability. So I’ll make some inferences and try to make the description more precise. If I’m wrong about what is being predicted, hopefully someone will correct me!7

In particular, it seems important to more precisely operationalize what things powerful AI could automate. Based on Dario’s description (which includes a high bar for capabilities and includes being able to run many copies at pretty high speeds), I think powerful AI would be capable of:

Fully or virtually fully automating AI R&D. As in, it would be able to autonomously advance AI progress8 without human help9 at a rate at least comparable to how fast AI progress would proceed with human labor.10

Being able to fully or virtually fully automate work on scientific R&D that could be done remotely within most companies/labs in most of the relevant fields (after being given sufficient context). As in, not necessarily being able to automate all such companies at once, but for most relevant fields, the AIs can virtually fully automate work that can be done remotely for any given single company (or at least the large majority of such companies). Correspondingly, the AIs would be capable of automating at least much of cognitive labor involved in R&D throughout the economy (though there wouldn’t necessarily be the compute to automate all of this at once).

Being able to automate the vast majority of white-collar jobs that can be done remotely (or tasks within white collar jobs that can be done remotely). Again, this doesn’t mean all such jobs could be automated at once as there might not be enough compute for this, but based on Dario’s description it does seem like there would be enough instances of AI that a substantial fraction (>25%?) of white collar work that can be done remotely in America could be automated (if AI capacity was spent on this and regulation didn’t prevent this).

Supposing that the people making this prediction don’t dispute this characterization, we can consider the prediction clearly falsified if AIs obviously don’t seem capable of any of these by the start of July 2027.11 Minimally, I think the capacity for AIs to fully or virtually fully automate AI R&D seems like it would pretty clearly be predicted and this should be relatively easy to adjudicate for at least AI company employees. The other types of automation could be messier and slower to adjudicate12 and adjudication on publicly available evidence could be delayed if the most powerful AIs aren’t (quickly) externally deployed.13

Regardless, I currently expect the prediction to be clearly falsified by the middle of 2027. I do expect we’ll see very impressive AI systems by early 2027 that perhaps accelerate research engineering within frontier AI companies by around 2x14 and that succeed more often than not in autonomously performing tasks that would take a skilled human research engineer within the company (who doesn’t have that much context on the specific task) a full work day.15

Another question that is worth clarifying is what probability Anthropic is assigning to this prediction. Some of Anthropic’s and Dario’s statements sound more like a >50% probability (e.g. “we expect”)16 while others sound more like predicting a substantial chance (>25%?) with words like “could” or “pretty well on track”. For now, I’ll suppose they intended to mean that there was around a 50% probability of powerful AI by early 2027. A calibrated forecast of 50% probability has a substantial chance of being wrong, so we shouldn’t update too hard based on just this prediction being falsified in isolation. However, if the prediction ends up not coming true, I do think it’s very important for proponents of the prediction to admit it was falsified and update.17

Earlier predictions

Unfortunately, waiting until the middle of 2027 to adjudicate this isn’t ideal. If the prediction is wrong, then we’d ideally be able to falsify it earlier. And if it is right, then hopefully we’d be able to get some sign of this earlier so that we can change our plans based on the fact that wildly transformative (and dangerous) AI systems will probably be created by early 2027! Are there earlier predictions which shed some light on this? Ideally, we’d have earlier predictions that I (and others) don’t expect to come true but Anthropic does expect to come true and ideally they would also suffice to update me (and others) substantially towards Anthropic’s perspective on timelines to powerful AI.

Dario has said (source) that he expects 90% of code to be written by AI sometime between June 2025 and September 2025 and that “we may be in a world where AI is writing essentially all of the code” by around March 2026.18 My understanding is that the prediction that 90% of code will be written by AIs hasn’t come true, though the situation is somewhat complicated. I discuss this much more here.

Regardless, I think that “fraction of (lines of) code written by AIs” isn’t a great metric: it’s hard to interpret because there isn’t a clear relationship between fraction of lines written by AI and how much AIs are increasing useful output. For instance, Anthropic employees say that Claude is speeding them up by “only” 20-40% based on some results in the Sonnet 4.5 system card despite AIs writing a reasonably high fraction of the code (probably a majority of committed code and a larger fraction for things like single-use scripts). And “AI is writing essentially all of the code” is compatible with a range of possibilities that differ in their implications for productivity.

Unfortunately, Anthropic (and Dario) haven’t made any other predictions (as far as I am aware) for what they expect to happen towards the beginning of 2026 if they are right about expecting powerful AI by early 2027. It would be great if they made some predictions. In the absence of these predictions, I’ll try to steelman some version of this view and talk about what I think we should expect to have happen by various points given this view. I’ll assume that they expect reasonably smooth/continuous progress rather than expecting powerful AI by early 2027 due to anticipating a massive breakthrough or something else that would cause a large jump in progress. Thus, we should be able to work backward from seeing powerful AI by early 2027 towards earlier predictions.

A proposed timeline that Anthropic might expect

I’ll first sketch out a timeline that involves powerful AI happening a bit after the start of 2027 (let’s suppose it’s fully done training at the start of March 2027) and includes some possible predictions. It’s worth noting that if their view is that there is a (greater than) 50% chance of powerful AI by early 2027, then presumably this probability mass is spread out at least a bit and they put some substantial probability on this happening before 2027. Correspondingly, the timeline I outline is presumably around median as far as how aggressive it is relative to their expectations (assuming that they do in fact put >50% on powerful AI by early 2027) while they must put substantial weight (presumably >25%) on substantially more aggressive timelines where powerful AI happens before October 2026 (~11 months from now).

I’ll work backwards from powerful AI being done training at the start of March 2027. My main approach for generating this timeline is to take the timeline in the AI 2027 scenario and then compress it down to take 60% as much time to handle the fact that we’re somewhat behind what AI 2027 predicted for late 2025 and powerful AI emerges a bit later than March 2027 in the AI 2027 scenario. I explain my process more in “Appendix: deriving a timeline consistent with Anthropic’s predictions”.

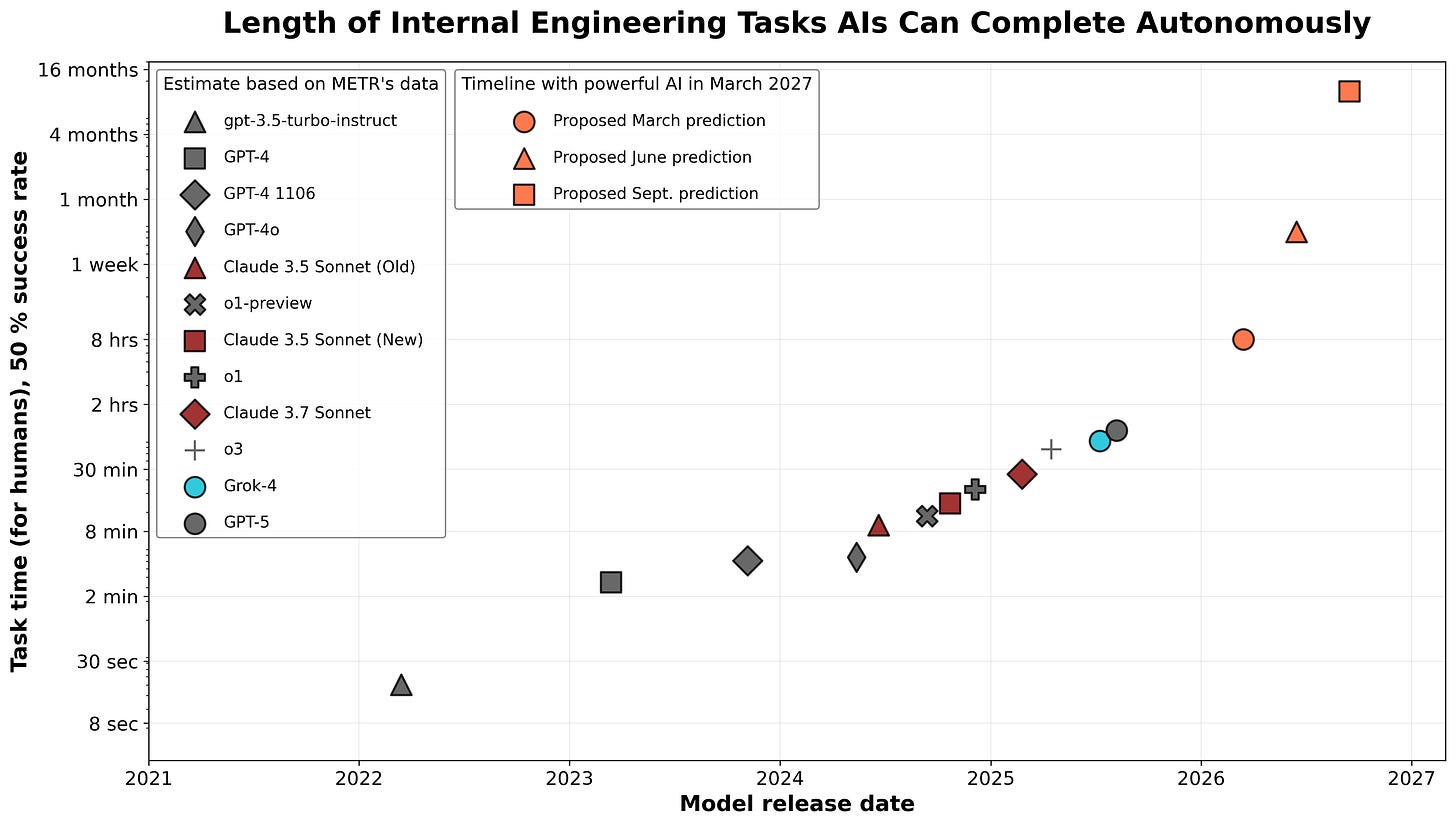

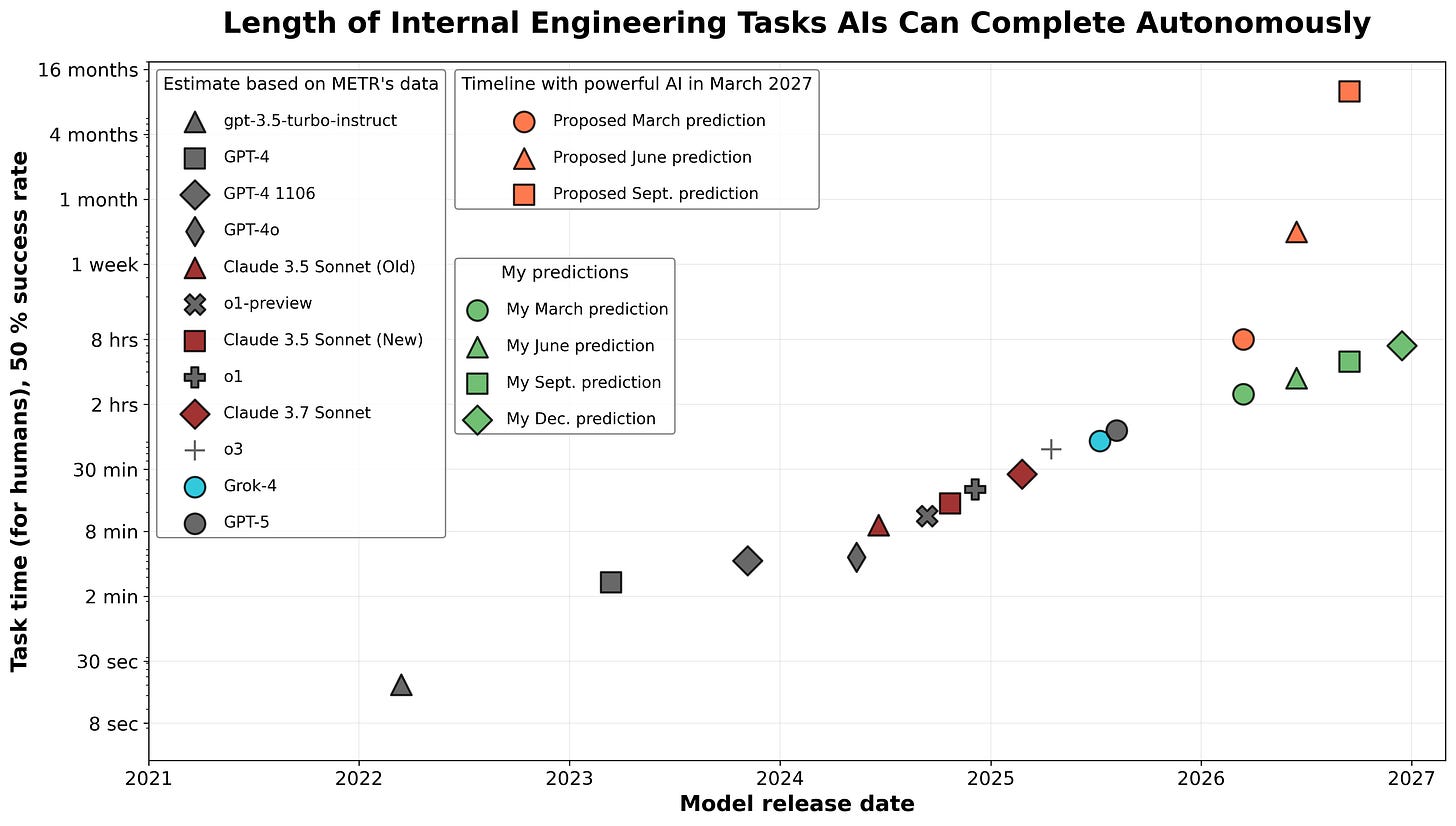

Figure 1: I’ve plotted predictions for the length of engineering tasks within the AI company that AIs can complete autonomously from the proposed timeline with estimates for historical values for this quantity based on METR’s time-horizon data. While these estimates are based on METR’s time-horizon data, the quantity being estimated is not the same (internal engineering tasks rather than results on a benchmark), so the numbers diverge. I’m converting from METR time-horizon to a guess at “internal engineering task time-horizon” by multipliying by 0.5.19 If this remains a reasonable approximation going forward (and is a reasonable approximation now), then these predictions can be tested by multiplying future 50%-reliability time horizons by 0.5 and comparing.

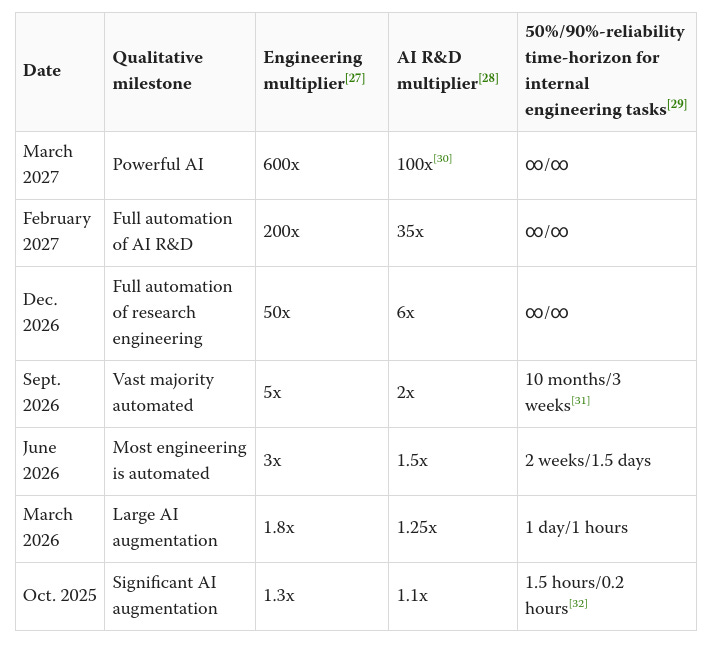

Here’s a qualitative timeline (working backward):

March 2027: Powerful AI is built (see operationalization above). Getting to this milestone required massive acceleration of AI R&D progress as discussed in earlier parts of the timeline.

February 2027: AIs can now fully (or virtually fully) automate AI R&D. AI R&D is proceeding much faster due to this automation and even prior to full automation there was large acceleration. Performance in domains other than AI R&D lags behind somewhat, but with AIs accelerating AI development, there is rapid improvement in how little data is required for AIs to perform well in some domain (both due to general-purpose learning abilities and domain-specific adaptations) and AIs are also accelerating the process of acquiring relevant data.

December 2026: AIs can now fully (or virtually fully) automate research engineering and can complete work much faster than humans and at much greater scale.20 This required patching the remaining holes in AI’s skill profiles, but this could happen quickly with acceleration from AI labor. AI R&D is substantially accelerated and this acceleration picks up substantially from here allowing us to quickly reach powerful AI just 3.5 months later. A bit earlier than this (perhaps in October or November), AIs became capable of usually completing massive green-field (as in, from scratch) easy-to-check software projects like reimplementing the Rust compiler from scratch in C while achieving similar performance (in compilation time and executable performance).21

September 2026: Research engineers are now accelerated by around 5x22 and other types of work are also starting to substantially benefit from AI automation. The majority of the time, AIs successfully complete (randomly sampled)23 engineering tasks within the AI company that would take decent human engineers many months and can complete the vast majority (90%) of tasks that would take a few weeks. These numbers assume the human engineer we’re comparing to doesn’t have any special context on the task but does have the needed skill set to complete the task.24 AIs still fail to complete many tasks that only the best software engineers can do in a week and have a bunch of holes in their skill profile that prevent perfect reliability on even pretty small tasks. But they are now pretty good at noticing when they’ve failed or will be unable to complete some task. Thus, with a bit of management, they can functionally automate the job of a junior research engineer (though they need help in a few places where most human employees wouldn’t and are much better than a human employee on speed and some other axes).

June 2026: Research engineers are accelerated by almost 3x. When given pretty hard, but relatively self-contained tasks like “write an efficient and production-ready inference stack for Deepseek V3 for Trainium (an AI chip developed by Amazon)”, AIs usually succeed.25 AIs succeed the majority of the time at engineering tasks that would typically take employees 2 weeks and succeed the vast majority of the time on tasks that take a day or two. AIs are now extremely reliable on small (e.g. 30 minute) self-contained and relatively easy-to-check tasks within the AI company, though aren’t totally perfect yet; they’re more reliable than the vast majority of human engineers at these sorts of tasks even when the humans are given much more time (e.g. 1 week).

March 2026: Research engineers are accelerated by 1.8x. AIs succeed the majority of the time at tasks that would have taken an engineer a day and perform somewhat better than this on particularly self-contained and easy-to-check tasks. For instance, AIs usually succeed at autonomously making significant end-to-end optimizations to training or inference within the company’s actual codebase (in cases where human experts would have been able to succeed at a similar level of optimization in a few days).26 AIs have been given a lot of context on the codebase allowing them to pretty reliably zero-shot small self-contained tasks that someone who was very familiar with the relevant part of the codebase could also zero-shot. Engineers have figured out how to better work with AIs and avoid many productivity issues in AI-augmented software engineering that were previously slowing things down and top-down efforts have managed to diffuse this through the company. Now, humans are writing very little code manually and are instead managing AIs.

October 2025: Research engineers are perhaps accelerated by 1.3x. This is right now. (This productivity multiplier is somewhat higher than what I expect right now, but perhaps around what Anthropic expects?)

Here’s a quantitative timeline. Note that this timeline involves larger productivity multipliers than I expect at a given level of capability/automation, but I think this is more consistent with what Anthropic expects.

I’ve focused on accelerating engineering (and then this increasingly accelerating AI R&D) as I think this is a key part of Anthropic’s perspective while also being relatively easy to track. Accelerating and automating engineering is also key given my views though perhaps a bit less central.

Why powerful AI by early 2027 seems unlikely to me

As stated earlier, I think powerful AI by early 2027 is around 6% likely, so pretty unlikely.33 (I think powerful AI happening this soon probably requires an algorithmic breakthrough that causes much faster AI progress than the current trend.34) To be clear, this probability is still high enough that it is very concerning!

Trends indicate longer

My main reason for thinking this is unlikely is that this would require progress that is way faster than various trends indicate.

METR has done work demonstrating a pretty long-running exponential trend in the length of software engineering tasks that AIs can complete half of the time.35 This trend predicts that by the end of 2026, AIs will be able to complete easy-to-check benchmark style tasks from METR’s task suite that take 16 hours around 50% of the time and tasks that take 3 hours around 80% of the time. While METR’s task suite is imperfect, my understanding is that we observe broadly similar or lower time horizons on other distributions of at least somewhat realistic software engineering tasks (including people trying to use AIs to help with their work). Naively, I would expect that AIs perform substantially worse on randomly selected engineering tasks within AI companies than on METR’s task suite. (To get the human duration for a task, we see how long it takes engineers at the company without special context but with the relevant skill set.) So the trend extrapolation predicts something much less aggressive for December 2026 than what happened in the above timeline (full automation of research engineering) and more generally the trend predicts powerful AI (which happens after automation of engineering) is further away.

Figure 1 (above) shows how the proposed timeline requires far above-trend progress.

Other trends support that we’re unlikely to see powerful AI by early 2027. This is my sense from qualitative extrapolation based on AI usefulness and benchmarks (as in, it doesn’t feel like another year or so of progress suffices for getting that close to powerful AI). I also think naive benchmark extrapolations of benchmarks much easier than automating engineering within AI companies (e.g. SWE-bench, RE-bench, terminal-bench) look like they will probably take another year or more to saturate. I expect a pretty large gap between saturating these easy benchmarks and fully automating engineering in AI companies (less than a year seems unlikely at the current pace of progress, a few years seems plausible).

My rebuttals to arguments that trend extrapolations will underestimate progress

One possible objection to these trend extrapolations is that you expect AI R&D to greatly accelerate well before full automation of engineering, resulting in above-trend progress. I’m skeptical of this argument as I discuss in this prior post. In short: AIs can speed up engineering quite a bit before this results in massive changes to the rate of AI progress, and for this to yield powerful AI by early 2027, you really need a pretty massive speedup relatively soon.

To be clear, I do think timelines to powerful AI are substantially shortened by the possibility that AI R&D automation massively speeds up progress; I just think we only see a large speedup at a higher level of capability that is further away. (This massive speedup isn’t guaranteed, but it seems likely to me and that makes shorter timelines much more likely.)

Another possible objection is that we haven’t yet done a good version of scaling up RL and once people figure this out early next year, we’ll see above-trend progress. I argue against this in another post.

Another objection is that you expect inherent superexponentiality in the time-horizon trend and you expect this to kick in strongly enough within the next 12 months (presumably somewhere around 2-hour to 8-hour 50% reliability time-horizon) to yield full automation of research engineering by the end of 2026. This would require very strong superexponentiality that almost fully kicks in within the next two doublings, so it seems unlikely to me. I think this can be made roughly consistent with the historical trend with somewhat overfit parameters, but it still requires a deviation from a simpler and better fit to the historical trend within a pretty small (and specific!) part of the time-horizon curve.

Another objection is that you expect a massive algorithmic breakthrough that results in massively above trend progress prior to 2027. This is a pretty specific claim about faster than expected progress, so I’m skeptical by default. I think some substantial advances are already priced in for the existing trend. The base rate also isn’t that high: massive (trend-breaking) breakthroughs seem to happen at a pretty low rate in AI, at least with respect to automation of software engineering (more like every 10 years than every couple of years).36

Another counterargument I’ve recently heard that I’m more sympathetic to goes something like:

Look, just 1.5 years ago AIs basically couldn’t do agentic software engineering at all.37 And now they’re actually kind of decent at all kinds of agentic software engineering. This is a crazy fast rate of progress and when I qualitatively extrapolate it really seems to me like in another 1.5 years or so AIs will be able to automate engineering. I don’t really buy this time-horizon trend or these other trends. After all, every concrete benchmark you can mention seems like it’s going to saturate in a year or two and your argument depends on extrapolating beyond these benchmarks using abstractions (like time-horizon) I don’t really buy. Besides, companies haven’t really optimized for time-horizon, so once they get AIs to be decent agents on short-horizon tasks (which is pretty close), they’ll just explicitly optimize for getting AIs good at completing longer tasks and this will happen quickly. After all, the AIs seem pretty close when I look at them and a year of progress is really a lot.

I’m somewhat sympathetic to being skeptical of the trend extrapolations I gave above because AIs haven’t seriously been doing agentic software engineering for very long (getting a longer period for the trend requires looking at models that can barely do agentic software engineering). More generally, we shouldn’t put that much weight on the time-horizon abstraction being a good way to extrapolate (for instance, the trend has only been making predictions for a short period and selection effects in finding such a trend could be significant). This pushes me toward being more uncertain and putting more weight on scenarios where AIs are massively (>10x) speeding up engineering in AI companies by the end of 2026.38

That said, even if AIs are fully automating engineering in AI companies by the end of 2026, I still think powerful AI by early 2027 is less likely than not. And more generally, I think there are some reasons to expect additional delays as I’ll discuss in the next section.

Naively trend extrapolating to full automation of engineering and then expecting powerful AI just after this is probably too aggressive

One forecasting strategy would be to assume AIs can fully automate engineering once they can do multi-month tasks reliably, trend extrapolate to this point using the METR trend, and then expect powerful AI a short period after this. I think this will result in overly aggressive predictions. I’m effectively using this strategy as a loose/approximate lower bound on how soon we’ll see powerful AI, but I think there are good reasons to think things might take longer.

One important factor is that time horizons on METR’s task suite are probably substantially higher than in practice time horizons for satisfactorily completing (potentially messy) real world tasks within AI companies. (For instance, see here and here.) One complication is that AIs might be particularly optimized to be good at tasks within AI companies (via mechanisms like having a fine-tuned AI that’s trained on the AI company’s codebase and focusing RL on AI R&D tasks).

Another related factor is that time horizons are measured relative to pretty good human software engineers, but not the best human research engineers. Full automation of engineering requires beating the best human engineers at the hardest tasks and even just massively speeding up overall engineering (e.g. by 10x) might require the same. Part of this is that some tasks are harder than other tasks (at least for humans) and require a much better engineer to complete them, at least to get the task done in a reasonable amount of time. Thus, 50% reliability at some time horizon relative to decent human engineers might still imply much worse performance at that same time horizon than the best research engineers in AI companies, at least at hard tasks. In general, AI often seems to take a while (as in, more than a year) to go from beating decent human professionals at something to beating all human professionals.

I also expect last mile problems for automation where a bunch of effort is needed to get AIs good at the remaining things that AI is still bad at that are needed to automate engineering, AI R&D, or other professions (this might be priced into trends like the METR time horizon trend39 or it might not). Another way to put this is that there will probably be a somewhat long tail of skills/abilities that are needed for full automation (but that aren’t needed for most moderate length tasks) and that are particularly hard to get AIs good at using available approaches. This means there might be a substantial gap between “AIs can almost do everything in engineering and can do many extremely impressive things” and “AIs can fully or virtually fully automate engineering in AI companies”. I do think this gap will be crossed faster than you might otherwise expect due to AIs speeding up AI R&D with partial automation (particularly partial automation of engineering, but somewhat broader than this). However, partial automation of engineering that results in engineers being effectively 10x faster (a pretty high milestone!) might only make AI progress around 70% faster.40

I also think it’s reasonably likely that there is a decently big gap (e.g. >1 year) between fully automating engineering and powerful AI, though I’m sympathetic to arguments that there won’t be a big gap. For powerful AI to come very shortly after full automation of engineering, the main story would be that you get to full automation of AI R&D shortly after fully automating engineering (because the required amount of further progress is small and/or fully automating engineering greatly speeds up AI progress) and that full automation of AI R&D allows for quickly getting AIs that can do ~anything (which is required for powerful AI as defined above). But, this story might not work out and we might have a while (1-4 years?) between full or virtually full automation of engineering in AI companies and powerful AI.

What I expect

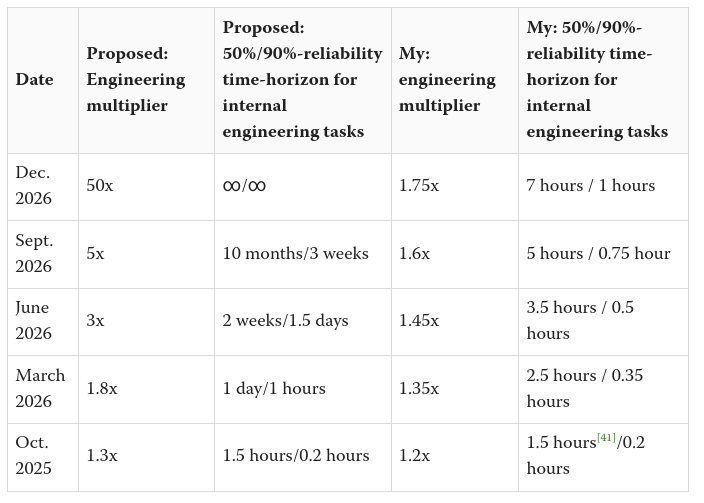

Here is a table comparing my quantitative predictions for 2026 to what we see for the proposed timeline consistent with Anthropic’s predictions that I gave above:

[Table footnotes41]

Figure 2: A comparison between my predictions and the predictions from the proposed timeline. Note that the historical values based on METR’s data are estimates for this quantity (see the footnote on the caption for Figure 1 for details).

My quantitative predictions are mostly me trying to extrapolate out trends. This is easiest for 50%/90% reliability time-horizon as we have some understanding of the doubling time.42

Notably, my predictions pretty quickly start to deviate pretty far from the proposed timeline I gave earlier that yields powerful AI in March 2027. Thus, it should be reasonably doable to update a decent amount throughout 2026 if my timeline is a reasonable characterization of Anthropic’s perspective.

As far as more qualitative predictions, I generally expect December 2026 to look similar to the description of March 2026 I gave in my proposed timeline above (as in, the proposed timeline matching Anthropic’s predictions). (I generally expect a roughly 3-4x slower progression than the proposed timeline matching Anthropic’s predictions, at least in the next year or two and prior to large accelerations in AI R&D.)

What updates should we make in 2026?

If something like my median expectation for 2026 happens

Suppose that what we see in 2026 looks roughly like what I expect (the ~median outcome), with AIs capable of substantially accelerating engineering in AI companies (by 1.75x!) and typically performing near day-long tasks by the end of 2026.43 How should various people update?

I’ll probably update towards slightly later timelines44 and a somewhat lower probability of seeing faster than trend progress (prior to massive automation of AI R&D, e.g. close to full automation of research engineering). This would cut my probability of seeing full automation of AI R&D prior to 2029 by a decent amount (as this would probably require faster than trend progress).45 However, I’d also update toward the current paradigm continuing to progress at a pretty fast clip and this would push towards expecting powerful AI in the current paradigm within 15 years (and probably within 10).

How should Anthropic update? I think this would pretty dramatically falsify their current perspectives, so they should update towards putting much more weight on figuring out what trends to extrapolate, towards extrapolating something like the time-horizon trend in particular, and towards being somewhat more conservative in general. They should also admit their prediction was wrong (and hopefully make more predictions about what they now expect in the future so their perspective is clear). It should probably be pretty clear that they are going to be wrong (given the information they have access to) by the end of 2026 and probably they can get substantial evidence of their prediction being wrong earlier (by the middle of 2026 or maybe even right now?).

In practice, it might be tricky to adjudicate various aspects of my predictions (e.g. the speedup to engineers in AI companies).

If something like the proposed timeline (with powerful AI in March 2027) happens through June 2026

If in June 2026, AIs are accelerating research engineers by something like 3x (or more) and are usually succeeding in completing multi-week tasks within AI companies (or something roughly like this), then I would update aggressively toward shorter timelines though my median to powerful AI would still be after early 2027. Here’s my guess for how I’d update (though the exact update would depend on other details): I’d expect that AI will probably be dramatically accelerating engineering by the end of 2026 (probably >10x), I’d have maybe 20% on full AI R&D automation by early 2027 (before May), and my median for full AI R&D automation would probably be pulled forward to around mid-2029. (I’d maybe put 15% on powerful AI by early 2027, 25% by mid-2028, and 50% by the start of 2031, though I’ve thought a bit less about forecasting powerful AI specifically.)

I don’t really know exactly how Anthropic should update, but presumably under their current views they should gain somewhat more confidence in their current perspective.

If AI progress looks substantially slower than what I expect

It seems plausible that AI progress will obviously be slower in 2026 or that we’ll determine that even near the end of 2026 AIs aren’t seriously speeding up engineers in AI companies (or are maybe even slowing them down). In this case, I’d update towards longer timelines and a higher chance that we won’t see powerful AI anytime soon. Presumably Anthropic should update even more dramatically than if what I expect happens. (It’s also possible this would correspond to serious financial issues for AI companies, though I’d guess probably even somewhat slower progress than what I expect would suffice for continuing high levels of investment.)

If AI progress is substantially faster than I expect, but slower than the proposed timeline (with powerful AI in March 2027)

If progress is somewhat faster than I expect, I’d update towards AI progress accelerating more and earlier than I expected (as in, accelerated when represented in trends/metrics I’m tracking; it might not be well understood as accelerating if you were tracking the right underlying metric). I’d generally update towards shorter timelines and a higher probability of the current paradigm (or something similar) resulting in powerful AI. I think Anthropic should update towards longer timelines, but this might depend on the details.

Appendix: deriving a timeline consistent with Anthropic’s predictions

I’ll pull some from the AI 2027 timeline and takeoff trajectory, as my understanding is that this sort of takeoff trajectory roughly matches Anthropic’s/Dario’s expectations (at least up until roughly powerful AI level capability, possibly Anthropic expects a slower industrial takeoff). Even if they reject other aspects of the AI 2027 takeoff trajectory, I think AI greatly accelerating AI R&D (or some other type of self-improvement loop) is very likely needed to see powerful AI by early 2027, so at least this aspect of the AI 2027 trajectory can safely be assumed. (Hopefully Anthropic is noticing that their views about timelines also imply a high probability of an aggressive software-only intelligence explosion and they are taking this into account in their planning!)

Based on the operationalization I gave earlier, powerful AI is more capable than the notion of Superhuman AI Researcher used in AI 2027, but somewhat less capable than the notion of Superhuman Remote Worker. I’ll say that powerful AI is halfway between these capabilities and in the AI 2027 scenario, this halfway point occurs in September 2027. We need to substantially compress this timeline relative to AI 2027, because we’re instead expecting this to happen in March 2027 rather than September (6 months earlier) and also the current level of capabilities we see (as of October 2025) are probably somewhat behind the AI 2027 scenario (I think roughly 4 months behind46). This means the timeline takes around 60% as long as it did in AI 2027.47

In AI 2027, the superhuman coder level of capability is reached 6 months before powerful AI occurs, so this will be 3.5 months before March 2027 in our timeline. From here, I’ve worked backward filling in relevant milestones to interpolate to superhuman coder with the assumption that progress is speeding up some over time. I was a bit opinionated in adjusting the exact numbers and details.

Recently (in fact, after I initially drafted this post), OpenAI expressed a prediction/goal of automated AI research by March 2028. Specifically, Jakub Pachocki said: “...anticipating this progress, we of course make plans around it internally. And we want to provide some transparency around our thinking there. And so we want to take this maybe somewhat unusual step of sharing our internal goals and goal timelines towards these very powerful systems. And, you know, these particular dates, we absolutely may be quite wrong about them. But this is how we currently think. This is currently how we plan and organize.” It’s unclear what confidence in this prediction OpenAI is expressing and how much it is a prediction rather than just being an ambitious goal. The arguments I discuss in this post are also mostly applicable to this prediction.

In this post, I’ll often talk about Anthropic as an entity (e.g. “Anthropic’s prediction”, “Anthropic thinks”, etc.). I of course get that Anthropic isn’t a single unified entity with coherent beliefs, but I still think talking in this way is reasonable because there are outputs from Anthropic expressing “official” predictions and because Dario does in many ways represent and lead the organization and does himself have beliefs. If you want, you can imagine replacing “Anthropic” with “Dario” in places where I refer to Anthropic as an entity in this post.

In fact, I’m not aware of anyone at Anthropic other than Jack Clark and Dario who have timelines this short, though I think many people expect only somewhat longer and the discussion in this post is still applicable to somewhat longer timelines.

It’s possible that Dario/Anthropic have updated towards longer timelines, but if so, there isn’t public evidence of this.

I cut a few of the bullets that seemed less relevant for brevity.

It’s unclear to me whether Dario means the AI does tasks that would take an experienced human hours/days/weeks or that the AI is autonomously working for hours/days/weeks without human involvement (possibly completing tasks which are much larger in scope because AIs likely work faster than humans at tasks that they are good at).

I normally forecast to more specific milestones like “AIs capable of full automation of AI R&D”, but I’ll stick with powerful AI for this post.

At least AI progress given a fixed supply of data and compute, the AIs wouldn’t necessarily be able to automate collecting data from the physical world insofar as this is important. I’m skeptical that this is that important. I also think Anthropic doesn’t think this is that important, at least my guess is that they probably don’t think spending lots of time acquiring additional data is necessary for very high levels of capability.

Beyond e.g. rarely asking humans about how to resolve various trade-offs between priorities that come up. Or perhaps very rare involvement by humans that doesn’t substantially bottleneck progress.

Perhaps Dario intends “smarter than a Nobel Prize winner” to not include the level of cognitive diversity within a large company and he also thinks this cognitive diversity is critical for AI progress or progress in other scientific domains. In this case, the AI would be able to automate any given job, but not automate whole companies without a bunch of human help. I’ll assume this isn’t what Dario means because it would be incongruous with usage of the term “country of geniuses in a datacenter”.

I interpret “early 2027” as “within the first third of 2027”, but we could charitably interpret “early 2027” as “within the first half of 2027”.

This is because giving the AI the relevant knowledge to do those jobs could take some time and diffusion might not happen that quickly (while I expect that AI companies will try hard to automate AI R&D as soon as they can, as much as they can). (It could still be easy to quickly adjudicate if AIs end up substantially more capable than needed for automating scientific R&D in most of the relevant fields.)

It’s worth clarifying that the prediction made by Anthropic isn’t (as far as I can tell) that powerful AIs will necessarily be externally deployed by early 2027 (they could be kept secret inside of AI companies), but I currently expect strong public evidence of these capabilities within a short period regardless. This is both because I expect external deployment of powerful AI (or at least systems close to powerful AI) within a short period and even in the absence of external deployment, we may be able to get strong evidence via other routes (e.g. transparency resulting in credible evidence).

By accelerating research engineering by 2x, I mean “the acceleration to research engineering activities is as valuable to the company as it would be to have all of their research engineers operate 2x faster when doing work that is reasonably centrally research engineering work (including parts of their job which aren’t coding like high level software design and meetings, though AIs wouldn’t necessarily have to accelerate every part of the job for an overall 2x boost)”. Note that accelerating research engineering by 2x results in a substantially less than 2x acceleration to overall AI progress. See here for discussion. This is also different from the notion of AI R&D labor acceleration I define here (the notion discussed in the linked post is a broader notion that includes all labor, not just engineering). Also, note that when I said “2x acceleration to research engineering”, I mean “2x acceleration to things that are best described as research engineering work (but still including messy aspects of these jobs, e.g. not just literal coding)”.

I also expect that by early 2027 (start of May 2027) annualized AI company revenue will be around $100 billion and that reasonably large fractions of software engineering outside of AI companies will be transformed substantially, though to a lesser extent than within AI companies.

Strangely, in their blog post about their recommendations to the OSTP Anthropic says something substantially stronger than what they say elsewhere: “As our CEO Dario Amodei writes in ‘Machines of Loving Grace’, we expect powerful AI systems will emerge in late 2026 or early 2027”. Dario doesn’t say this in Machines of Loving Grace, instead he says a substantially weaker statement: “I think it could come as early as 2026, though there are also ways it could take much longer.”. Further, in their actual submission to the OSTP, they say “Based on current research trajectories, we anticipate that powerful AI systems could emerge as soon as late 2026 or 2027.” which is also weaker. The submission to the OSTP did say “Powerful AI technology will be built during this Administration.” which implies a pretty high probability (maybe 80%?) on powerful AI prior to January 2029 and thus probably a decently high probability by the middle of 2027 (only 1.5 years earlier), though this could be consistent with thinking powerful AI by early 2027 is only plausible (e.g. 25% likely).

I would also feel substantially more sympathetic (and retain more weight on the views of the relevant people) if intermediate predictions (for e.g. the beginning/middle of 2026) were made by relevant proponents and the relevant proponents acknowledged these predictions were falsified insofar as that ends up being the case. Note that even if intermediate predictions consistent with shorter timelines are falsified, that shouldn’t necessarily result in having (much) longer timelines because there might be other stronger updates pointing in favor of shorter timelines.

The full quote is: “I think we’ll be there in three to six months—where AI is writing 90 percent of the code. And then in twelve months, we may be in a world where AI is writing essentially all of the code.”

Because METR doesn’t directly measure time-horizon on internal engineering tasks relative to an AI company’s engineers (instead measuring this on their task suite with contractors for the human baseline), we need to convert these values. I’ve done this by multiplying by 0.5 which is my guess for the correspondence between these numbers for a given model, at least over the past 6 months or so. (This lines up with the initial value used for October of 1.5 hours for the 50% reliability time-horizon on internal tasks.) It’s pretty unclear how the correspondence between these numbers will change in the future.

And can also complete these projects around 30x faster than a small human team, making this a somewhat feasible benchmark to run.

As discussed earlier, when I say this, I mean “research engineers are as productive as if they operated 5x faster when doing research engineering work (including non-coding activities like meetings, though AIs don’t have to speed up all aspects of the job to achieve an overall acceleration of 5x)”. I don’t necessarily mean their output is 5x higher as this might (in some cases) depend on other inputs like compute.

Doing the decomposition into smaller tasks and then sampling might be messy. I’ll ignore these details and assume some reasonable option exists.

That said, for this timeline at the point of September 2026, AIs can usually acquire the relevant context quickly and easily.

A more general operationalization is: AIs can implement production-ready inference for a new (importantly different architecture) AI in the context of an existing codebase with existing examples and another implementation of this new AI (for different hardware) that can be probed, and these AIs can reach performance (and stability etc.) similar to a well optimized human implementation. The implementation would need to be mergeable as is, but wouldn’t necessarily need to handle actually being fully deployable.

Maybe we can operationalize this as: after humans have gotten the inference/training implementation basically working, and have implemented good correctness tests, the AI can improve the implementation by as much as an expert human would in a few days, in a PR that’s at least as mergeable as typical human PRs, more than half of the time.

The boost by AIs to engineering productivity is as good as an X times speed up to how fast engineers at the company work. Like, for 10x, it is as good as if all of the engineers thought, typed, talked, etc. 10x faster.

I’m using the same notion of AI R&D multiplier as AI 2027. As in, the pre-diminishing returns multiplier of algorithmic progress on top of the amount of compute and human labor at a given amount in time. Note that this isn’t a multiplier of overall AI progress which is also driven by scaling up compute and scaling up spending on data. I’m also basing these numbers on AI 2027 to a significant degree, though assuming somewhat more aggressive numbers based on Anthropic predicting a faster takeoff.

This is for randomly sampled engineering tasks within the company, when comparing to a typical/normal engineer who has the relevant skill set but who doesn’t have special context. I give both the 50% reliability number and the 90% reliability number.

It’s possible that Anthropic expects less acceleration in AI R&D from powerful AI (and full automation of AI R&D) and instead expects that the amount of additional effort required to go from “full automation of research engineering” to “powerful AI” isn’t that high. I’ve pulled in AI R&D acceleration numbers by assuming Anthropic thinks acceleration will be somewhat more aggressive than predicted by AI 2027 in keeping with their more aggressive forecast. I personally expect lower multipliers.

I tentatively think the gap between 50% and 90% reliability will increase when AIs are very capable and good long horizon agents but still have some things they can’t do. This is because there are many very long/large tasks that are at least somewhat specialized or that at least avoid potential weaknesses but when randomly sampling tasks a bunch of tasks will still touch upon weaknesses. (Another way to put this is that tasks will cluster some in the skill set required and many/most big tasks won’t be diverse enough to hit the AI’s weaknesses.)

It’s plausible that the 90% reliability time horizon for internal engineering tasks is actually substantially lower right now; I don’t have a strong view about this number and it might be driven by a pretty small fraction of tasks that current AIs are very bad at. I don’t think this makes a huge difference to the forecast either way.

This is my view after putting some weight on the views of people predicting very short timelines, including some weight on predictions from Dario/Anthropic

To be clear, this algorithmic breakthrough would be reasonably likely to involve scaling up some new type of training that requires collecting new types of data. (E.g., see recent advances in RLVR.) So while the breakthrough would have to be algorithmic to be fast enough, progress could also involve a bunch of data collection and scaling up training work that happens over some longer period of time smoothing out progress (though this would have to happen pretty quickly). In general, I’d guess that large breakthroughs tend to get smoothed out over some period due to iteration and figuring out how to best leverage the new thing.

It would be reasonable to start the trend with GPT-3.5 in which case the trend is around 3.5 years old or with GPT-2 in which case the trend is around 6.75 years old. (The measurements for GPT-2 and GPT-3 are more dubious, so I’m sympathetic to ignoring these models.)

There are also pretty good reasons to think that the rate of massive breakthroughs / paradigm changes that result in above trend progress should go down over time as the field of AI grows. This is via the law of large numbers; however, research progress being possibly heavy tailed complicates this argument.

Sonnet 3.5 was released 1.5 years ago and I’m calling that as the first AI that could do agentic software engineering a bit.

I think “accelerating engineering using AIs is as useful as making all engineers at the company >10x faster” is around 15% likely by the end of 2026.

It could be priced in for the time horizon trend because increasingly long tasks require being able to complete increasingly hard and diverse subtasks. See here for some discussion of this perspective. Even if it is already priced in, this does imply that you might only reach full automation of engineering at the point of surprisingly high time horizons (rather than seeing inherent superexponentiality in the trend). E.g., maybe you get full automation when the trend predicts AIs can reliably complete many year long tasks selected from a diverse distribution of messy tasks from within AI companies. This would be because this long time horizon corresponded to resolving the final last mile problems.

See here for discussion and the rest of the post for a broader argument like this.

I expect a bit lower than 1.5 hours, but the comparison is cleanest if I align the initial values between my prediction and use the same conversion factor.

Though we don’t necessarily know the conversion between performance on METR’s task suite (with relatively benchmarkable tasks) and performance on actual tasks within AI companies. These could differ in either the doubling time or the initial time-horizon. I’m also looking at 90% reliability while METR measures 80% reliability, though some fraction of tasks may be invalid/impossible meaning that 80%-reliability on METR’s task suite might be closer to something like 85% in practice.

By the end of 2026, METR’s task suite may no longer be meaningful due to not having enough hard tasks from a representative distribution. Regardless, we should be able to get a sense for how good AIs are at autonomous software engineering using other benchmarks and qualitative reporting.

In the median outcome, I expect to update towards longer timelines but this doesn’t violate conservation of expected evidence because there is some chance I update a lot towards much shorter timelines. (In other words, my update distribution is asymmetric.) See also Joe Carlsmith’s blog post about predictable updating.

However, I would still think that massive automation of engineering within AI companies (accelerating engineering by >5x) before 2029 is totally plausible and this could pose substantial risk of sabotage from misaligned AIs.

AI 2027 forecasts a ~1.25x AI R&D speedup for October while I think the current speedup is probably more like 1.1x or possibly lower. In terms of qualitative descriptions of capabilities, we seem somewhat behind though not that far behind.

We’re in the equivalent of 6/2025 in the AI 2027 trajectory which is 27 months before 9/2027 and we’re cutting off 10 months from this trajectory meaning the trajectory is (27-10) / 27 = ~0.6.

Um their model seems to be on track. What the hell

It’s worth noting that the progress so far, though it follows a task doubling time of 7 months (per METR), is not built on simple scaling laws. Therefore, it is perhaps not reasonable to expect continual progress just because research time passes. Since scaling laws in pre-training had been exhausted, reasoning tokens, agentic workflows, and scaling RL have provided the additional boosts. Most evidence point to these additional developments as having plateaus.

I am skeptical about using priors in a time series without considering the variables that have driven that performance increase to begin with.

I would expect the continual progress to reach a plateau by the end of 2025, unless another architectural breakthrough achieves scale.